Saturday Hashtag: #AIDigitalAssumption

Welcome to Saturday Hashtag, a weekly place for broader context.

|

Listen To This Story

|

The artificial intelligence boom exposes a stark contrast: Adoption is accelerating with minimal risk analysis, while investors are discovering that profitability predictions were vastly overhyped.

Two emerging technologies highlight this tension.

New AI Innovations

In Beijing, 20-year-old Guo Hangjiang built MiroFish in 10 days, gaining GitHub fame and about $4 million in funding. It simulates thousands of autonomous agents with unique personalities and social ties, producing emergent behaviors that could model digital societies, markets, or elections, though its predictions remain untested.

Meanwhile, Peter Steinberger’s OpenClaw is spreading across China, automating tasks on WeChat, Slack, and email. It boasts hundreds of millions of downloads, 20 million monthly active users, and backing from Alibaba, Tencent, and ByteDance.

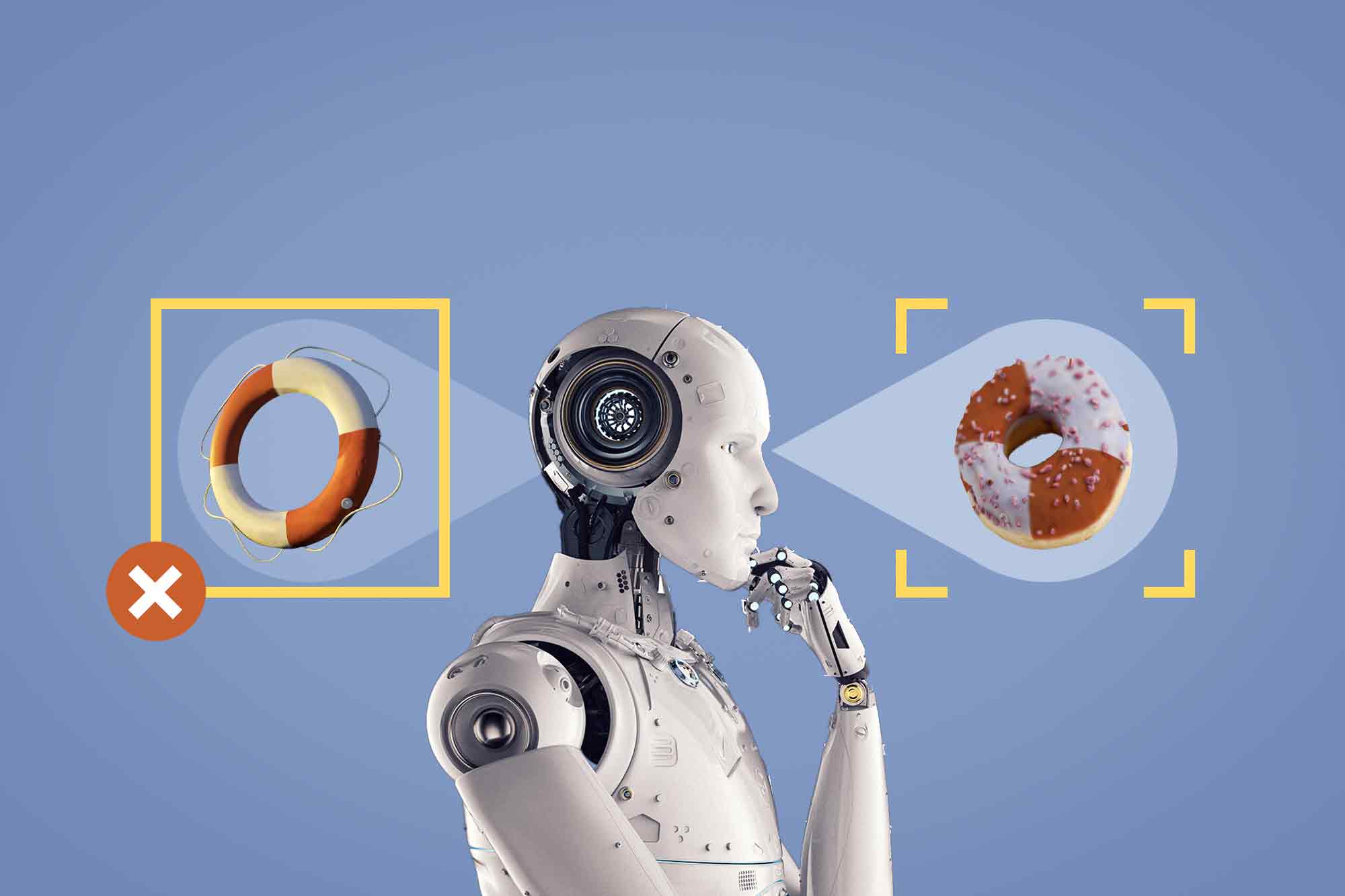

Together, they illustrate the rise of the AI digital assumption function: swarms of agents autonomously making unsupported assumptions about the world and often producing dangerous outcomes.

That autonomy requires extensive data access. MiroFish and OpenClaw rely on private messages, internal documents, proprietary business data, and behavioral logs. Greater capability demands broader access.

AI Vulnerability

This AI access-functionality matrix creates enormous systemic risk: Companies and governments are being exposed to greater digital threats, and individuals lose control over personal data.

Chinese authorities have already taken steps warning against using OpenClaw on work devices. This same unchecked tech adoption in the US has also prompted the Federal Communications Commission to ban foreign-made routers, while the Food and Drug Administration investigates Chinese-linked backdoors in medical devices already in use.

Reality vs. Hype

The AI investment boom is faltering as market hype collides with reality. Adoption costs, infrastructure demands, and energy consumption are straining companies.

BlackRock, with its massive holdings in private, unregulated loan markets, exemplifies this fragility. Its recent restrictions on investor withdrawals highlight both the vulnerability of inflated valuations and the extent of investor overconfidence in the sector.

Energy constraints amplify the risk. AI requires massive amounts of electricity. Big Tech is betting on nuclear power, but the US faces shortages in uranium, nuclear-grade welders, and regulatory bottlenecks.

Russia and China dominate uranium enrichment and reactor construction, exposing AI infrastructure to severe geopolitical risk. Microreactors are often touted as a solution, but they remain technically unproven, politically contentious, and years away from deployment.

Big Questions

MiroFish models behavior. OpenClaw executes it. Together, they simulate and act in the real world without clear guarantees of accuracy, security, or control.

This is a new computing model built on autonomous swarms, continuous data access, and vulnerable infrastructure.

The real question is no longer what AI can do, but what it can access — and who else might be watching.

Hashtag Picks

Does the AI Business Model Have a Fatal Flaw?

From Reuters: “Hundreds of billions of dollars are riding on the assumption that artificial intelligence will be reliable enough for high-stakes work. New research suggests it may never be. The AI tools that power ChatGPT and its rivals — known as large language models, or LLMs — are a genuine productivity-enhancing innovation. But they have serious shortcomings, most notably, their tendency to hallucinate, or make things up.”

Mirofish AI: Is This the Future of Swarm Intelligence or Just Hype?

The author writes, “By now, you’ve probably seen Mirofish on your feed. It’s the open-source tool that’s currently taking over GitHub by simulating human behaviour rather than predicting it. The idea is simple: build a digital environment, drop in a few AI agents, and watch them think, argue, and influence each other until an outcome emerges on its own. But is it worth the hype? Here’s everything you need to know about this trending AI tool!”

Agentic AI Exposes What We’re Doing Wrong

From InfoWorld: “We’ve spent the last decade telling ourselves that cloud computing is mostly a tool problem. Pick a provider, standardize a landing zone, automate deployments, and you’re ‘modern.’ Agentic AI makes that comforting narrative fall apart because it behaves less like a traditional application and more like a continuously operating software workforce that can plan, decide, act, and iterate.”

Agentic AI Fails in Production for Simple Reasons — What MLDS 2026 Taught Me

The author writes, “Most agentic AI failures in production are not caused by weak models, but by stale data, poor validation, lost context, and lack of governance. MLDS 2026 reinforced that enterprise‑grade agentic AI is a system design problem, requiring validation‑first agents, structural intelligence, strong observability, memory discipline, and cost‑aware orchestration — not just bigger LLMs. I recently attended MLDS 2026 (Machine Learning Developer Summit) by Analytics India Magazine (AIM) in Bangalore. While many sessions featured advanced models and agentic frameworks, the most valuable insight was unexpected.”

Matt Shumer’s Viral Blog About AI’s Looming Impact on Knowledge Workers Is Based on Flawed Assumptions

From Forbes: “AI Influencer Matt Shumer penned a viral blog on X about AI’s potential to disrupt, and ultimately automate, almost all knowledge work that has racked up more than 55 million views in the past 24 hours. Shumer’s 5,000-word essay certainly hit a nerve. Written in a breathless tone, the blog is constructed as a warning to friends and family about how their jobs are about to be radically upended. … But despite its viral nature, Shumer’s assertion that what’s happened with coding is a prequel for what will happen in other fields — and, critically, that this will happen within just a few years — seems wrong.”

‘A Feedback Loop With No Brake’: How an AI Doomsday Report Shook US Markets

From The Guardian: “US stock markets have been hit by a further wave of AI jitters, this time from yet another viral — and completely speculative — warning about the impact of the technology on the world’s largest economy. The latest foreboding is from Citrini Research, a little-known US firm that provides insights on ‘transformative “megatrends.”’ Its post on Substack, which it called a ‘scenario, not a prediction,’ rattled investors by portraying a near future in which autonomous AI systems — or agents — upend the entire US economy, from jobs to markets and mortgages.”

A Systematic Taxonomy of Security Vulnerabilities in the OpenClaw AI Agent Framework

The authors write, “The deployment of large language models as autonomous agents — systems that perceive external input, reason over it, and produce actions with real-world effects — introduces a qualitatively different security problem from that of conventional application software. In a traditional application, the code determines behavior; an attacker who cannot modify the code is largely confined to exploiting memory safety errors or logic bugs in well-defined input handlers. In an AI agent framework, the model’s output is itself a control signal: a tool call emitted by the model instructs the runtime to execute a shell command, read or write a file, navigate a browser, or deliver a message across a messaging platform. The attack surface therefore includes not only the runtime’s implementation correctness but also the model’s susceptibility to adversarial influence through any data path that reaches its context window.”